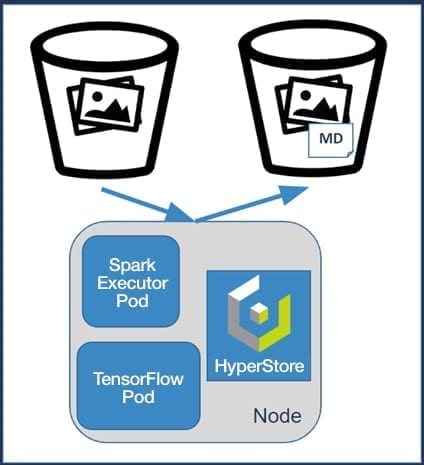

Object storage is known for its scalability and easy-to-use S3 APIs, but making object data useful for analytics sometimes requires adding metadata about the objects. This article describes a case study of adding metadata to S3 objects and then putting it to use. Starting with images stored in Cloudian HyperStore object storage, we use a TensorFlow machine learning model to identify what’s depicted in each image, attach the resulting labels to each image as S3 metadata, and finally index and search that metadata automatically with ElasticSearch and Kibana.

INPUT

Unlabeled images stored in a HyperStore S3 bucket.

OUTPUT

Images tagged with metadata labels describing their contents, stored back in HyperStore and indexed in ElasticSearch.

METHOD

Use a TensorFlow deep learning model to determine labels for what’s in each image.

Use HyperStore’s ElasticSearch plugin to make metadata searchable and visualizable.

In an S3 bucket named “images,” we upload about 300 images of common items, including animals, vehicles, and household goods. An object store makes it convenient to store a large collection of images like this easily and economically.

By locating the analytics/computation processing close to the data, HyperStore takes advantage of data locality and an edge-hub topology for efficient and timely processing. This approach is fast, because processing happens as close as possible to where the data is generated; cheap, because network transfer costs for uploads and subsequent downloads are minimal; and secure, because the data can be kept private and protected.

The image recognition process reads each object from the S3 bucket and classifies the image by applying the TensorFlow model. The S3 list-objects API is used to iterate over the objects in the bucket. For each object, checks are done first, including confirming that the Content-Type is an image and that the size does not exceed a threshold. The image is then scaled to a fixed size, and the model is executed using code based on TensorFlow’s LabelImage class. The TensorFlow model used for image recognition is the pre-trained Inception 5h model, which recognizes 1,000 classes of images from ImageNet.
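The per-object pre-checks can be sketched as follows. This is a minimal Python illustration, not the article’s own code: `pages` stands in for paginated list-objects results and `head` for a HEAD Object call, since list-objects reports each key’s size but not its Content-Type. The function names and the 10 MiB limit are hypothetical; the article does not state the actual threshold.

```python
from typing import Callable, Dict, Iterable, Iterator, List, Optional

# Hypothetical size limit; the article does not give the real threshold.
MAX_IMAGE_BYTES = 10 * 1024 * 1024


def is_classifiable(content_type: Optional[str], size: int,
                    max_bytes: int = MAX_IMAGE_BYTES) -> bool:
    """Return True if the object is an image no larger than max_bytes."""
    if not content_type or not content_type.lower().startswith("image/"):
        return False
    return size <= max_bytes


def iter_image_keys(pages: Iterable[List[Dict]],
                    head: Callable[[str], Dict]) -> Iterator[str]:
    """Yield keys of objects that pass the pre-checks.

    Each page is a list of listing entries with "Key" and "Size" fields
    (as list-objects returns them); head(key) returns the object's headers,
    including "ContentType".
    """
    for page in pages:
        for entry in page:
            content_type = head(entry["Key"]).get("ContentType")
            if is_classifiable(content_type, entry["Size"]):
                yield entry["Key"]
```

Checking the size from the listing before issuing the HEAD call would save one request per oversized object; the sketch keeps both checks in one function for readability.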

Below are examples of input images and the classification output produced by the image recognition process: the top labels, each with its associated probability.

2925 [main] INFO com.cloudian.hap.LabelImage images/fox.jpg:

red fox (58.52% likely)

kit fox (39.54% likely)

coyote (0.73% likely)

grey fox (0.71% likely)

red wolf (0.25% likely)

5095 [main] INFO com.cloudian.hap.LabelImage images/iphone.jpeg:

cellular telephone (41.24% likely)

hand-held computer (40.34% likely)

pay-phone (7.52% likely)

iPod (3.88% likely)

remote control (1.54% likely)
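Producing output like the listings above amounts to taking the highest-probability entries from the model’s output vector and pairing each with its class name. A minimal Python sketch, assuming `labels` and `probabilities` are parallel sequences (the model’s class names and its output probabilities); the function names are hypothetical, and k=5 simply matches the examples.

```python
import heapq
from typing import List, Sequence, Tuple


def top_k_labels(labels: Sequence[str], probabilities: Sequence[float],
                 k: int = 5) -> List[Tuple[str, float]]:
    """Return the k (label, probability) pairs with the highest probability."""
    if len(labels) != len(probabilities):
        raise ValueError("labels and probabilities must have the same length")
    # nlargest avoids sorting the full ~1,000-entry output vector.
    return heapq.nlargest(k, zip(labels, probabilities), key=lambda pair: pair[1])


def format_labels(pairs: List[Tuple[str, float]]) -> List[str]:
    """Render pairs in the same style as the log lines above."""
    return [f"{label} ({prob * 100:.2f}% likely)" for label, prob in pairs]
```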

Some configurations to control the classifier:

The image labels and their associated probabilities are added to the object as S3 user-defined metadata: the key is the prefix “imgtag_” plus the label (e.g., “red fox”), and the value is the associated probability (e.g., “0.59”). The label is URL-encoded to ASCII to satisfy the metadata key requirements; notably, the <SPACE> character is converted to ‘+’. To update an existing object’s user-defined metadata, the S3 Copy Object API is used to copy the object onto itself with the x-amz-metadata-directive: REPLACE header. The object and its metadata are now stored in HyperStore S3. This example of an S3 GET command on bucket “images” and object “fox.jpg” shows the user-defined metadata output:
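The key encoding and the self-copy request can be sketched in Python with the standard library’s `urllib.parse.quote_plus`, which converts spaces to ‘+’ as described. This is an illustrative sketch, not HyperStore’s code: the helper names are hypothetical, and it only builds the request headers rather than sending them. Formatting the value to two decimals matches the “0.59” example. Because REPLACE makes S3 take metadata from the request instead of the source object, the sketch carries over the object’s existing user metadata and its Content-Type so they are not lost.

```python
from typing import Dict, List, Tuple
from urllib.parse import quote, quote_plus

TAG_PREFIX = "imgtag_"


def tag_metadata(pairs: List[Tuple[str, float]]) -> Dict[str, str]:
    """Map (label, probability) pairs to user-defined metadata entries."""
    # quote_plus percent-encodes reserved and non-ASCII characters and
    # converts spaces to '+', e.g. "red fox" -> "red+fox".
    return {TAG_PREFIX + quote_plus(label): f"{prob:.2f}" for label, prob in pairs}


def copy_in_place_headers(bucket: str, key: str, content_type: str,
                          existing_meta: Dict[str, str],
                          pairs: List[Tuple[str, float]]) -> Dict[str, str]:
    """Headers for a Copy Object request that rewrites the object's metadata."""
    headers = {
        # Copy the object onto itself...
        "x-amz-copy-source": quote(f"/{bucket}/{key}"),
        # ...taking metadata from this request instead of the source.
        "x-amz-metadata-directive": "REPLACE",
        # With REPLACE, system metadata such as Content-Type also comes
        # from the request, so resend it.
        "Content-Type": content_type,
    }
    # Keep existing user metadata; new tags win on key collisions.
    merged = {**existing_meta, **tag_metadata(pairs)}
    for name, value in merged.items():
        headers["x-amz-meta-" + name] = value
    return headers
```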

HyperStore can index object metadata in ElasticSearch. Once the metadata is in ElasticSearch, Kibana can be used to explore it.

Here’s an example query to find all images where the label “kit fox” has a probability greater than 0.4. The Kibana query bucketname:images AND userMetadata.imgtag_kit+fox>0.4 returns 2 objects:
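Because the field name must match the encoded metadata key exactly (a misspelled prefix simply matches nothing), it can help to generate such queries from the label instead of typing them by hand. A minimal Python sketch; the function name is hypothetical, and the `bucketname` and `userMetadata.` field names are taken from the query above.

```python
from urllib.parse import quote_plus

TAG_PREFIX = "imgtag_"


def label_threshold_query(bucket: str, label: str, min_prob: float) -> str:
    """Build a Kibana query for objects whose tag for `label` exceeds min_prob."""
    # Encode the label the same way it was encoded when the metadata was
    # written, so "kit fox" becomes the field userMetadata.imgtag_kit+fox.
    field = f"userMetadata.{TAG_PREFIX}{quote_plus(label)}"
    return f"bucketname:{bucket} AND {field}>{min_prob}"
```

The metadata values are stored as strings such as “0.40”, so whether the `>` comparison is numeric depends on how the index maps that field.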

If you don’t care what type of “fox” it is, you can use wildcards. The Kibana query bucketname:images AND userMetadata.imgtag\*fox\*:* returns 13 objects:
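The wildcard query matches any object that has at least one metadata key fitting the pattern, whatever the probability value. The same selection can be reproduced locally, for example to sanity-check a hit count against exported metadata. A Python sketch using `fnmatch.fnmatchcase`; the function name and the in-memory `{object key: {metadata key: value}}` layout are assumptions for illustration.

```python
from fnmatch import fnmatchcase
from typing import Dict, List


def objects_with_matching_tag(objects: Dict[str, Dict[str, str]],
                              pattern: str = "imgtag*fox*") -> List[str]:
    """Return object keys having any metadata key that matches pattern."""
    return sorted(
        obj_key
        for obj_key, metadata in objects.items()
        # The value is ignored, mirroring the ":*" in the Kibana query.
        if any(fnmatchcase(meta_key, pattern) for meta_key in metadata)
    )
```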

S3-compatible object stores like HyperStore make it possible to store petabytes of data. The tools described here provide a convenient way to move the compute to the data and, as in this use case, to add metadata to the object data. In the same spirit, we are developing more use cases to enhance object storage analytics, including processing streaming data and other machine learning tasks.