One exciting feature of Cloudian’s new HyperStore 7.5.1 release is application-aware hybrid storage policies. This feature lets HyperStore boost performance by dynamically adapting the storage policy to the workload. The result is faster response for small-object access, and because small objects are handled more efficiently, overall performance improves for all object sizes. This is not tiering, but rather a smart implementation of storage management policies. Read on to learn more about how it works.

How object storage protects data

Object storage systems spread objects across distributed infrastructure, providing high availability, durability, and efficient data retrieval. HyperStore is an object storage system built to be highly scalable, capable of storing and managing exabytes of data. Object storage systems store data as discrete units called objects, which applications access over HTTP using S3 API calls. Each object is identified by a unique key, and access is secured with access/secret key credentials. Object sizes range from bytes to terabytes, depending on the requirements of the application or use case.

The size of an object affects how it is stored and retrieved. Larger objects take longer to upload or download because more data must move, and they consume more storage space and network bandwidth. Smaller objects carry a fixed per-object overhead for storage and management within the object storage system. When an application retrieves many small objects sequentially, the overhead of each individual request can add up and reduce the system’s overall performance. It is therefore essential to account for object size when optimizing for a given application or use case.
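
To make the small-object overhead concrete, here is a back-of-the-envelope model in Python. It assumes every request pays a fixed overhead (round trip, authentication, metadata lookup) and then transfers data at a constant rate. The 5 ms and 100 MB/s figures are hypothetical placeholders, not HyperStore measurements, and real clients can also issue requests in parallel.

```python
# Illustrative model: every request pays a fixed overhead, then the data
# transfers at a constant rate. Both constants are assumptions for this sketch.
PER_REQUEST_OVERHEAD_S = 0.005   # hypothetical 5 ms fixed cost per request
BANDWIDTH_BYTES_PER_S = 100e6    # hypothetical 100 MB/s throughput


def sequential_fetch_time_s(num_objects: int, object_bytes: int) -> float:
    """Time to fetch num_objects objects, one request at a time."""
    per_object_s = PER_REQUEST_OVERHEAD_S + object_bytes / BANDWIDTH_BYTES_PER_S
    return num_objects * per_object_s


def overhead_share(object_bytes: int) -> float:
    """Fraction of a single request's time spent on fixed overhead, not data."""
    return PER_REQUEST_OVERHEAD_S / sequential_fetch_time_s(1, object_bytes)


if __name__ == "__main__":
    # The same 1 GB of data, stored as many small objects or as one large one.
    many_small = sequential_fetch_time_s(10_000, 100_000)   # 10,000 x 100 KB
    one_large = sequential_fetch_time_s(1, 1_000_000_000)   # 1 x 1 GB
    print(f"10,000 x 100 KB: {many_small:.1f} s")
    print(f"1 x 1 GB:        {one_large:.1f} s")
    print(f"overhead share at 100 KB: {overhead_share(100_000):.0%}")
```

Under these assumed numbers, fetching 1 GB as 10,000 objects of 100 KB takes about 60 s sequentially, versus about 10 s as a single object, and roughly 83% of each 100 KB request's time goes to overhead rather than data.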

Replication factor vs erasure coding

One way to optimize the workflow and storage layer is to select the most appropriate storage policy. The choice between Replication Factor (RF) and Erasure Coding (EC) storage policies depends on several factors, including your specific requirements for data durability, storage efficiency, and performance. Both approaches offer high data durability and availability, since each replica or distributed fragment provides redundant protection if a node or hardware failure occurs. Where object size is concerned, however, the primary considerations are performance and storage efficiency.

Replication Factor (RF) is a storage policy that creates multiple copies, or replicas, of each object and distributes them across different storage nodes. RF can offer better read performance, because multiple copies of an object are available on different storage nodes, allowing the object data to be retrieved in parallel. However, each replica is a full-size copy of the object, so RF consumes more storage space than EC and has lower storage efficiency.
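
The capacity gap between the two approaches is simple arithmetic. The sketch below compares three full replicas (written here as RF3) with an erasure-coding layout of 4 data plus 2 parity fragments (EC 4+2). Both are common example layouts chosen for illustration, not HyperStore defaults, and the sketch ignores fragment padding and metadata. Assuming each replica or fragment sits on a different node, both layouts tolerate the loss of any two nodes.

```python
import math
from fractions import Fraction


def rf_efficiency(replicas: int) -> Fraction:
    """Usable share of raw capacity when each of `replicas` copies is full size."""
    return Fraction(1, replicas)


def ec_efficiency(k: int, m: int) -> Fraction:
    """Usable share of raw capacity with k data fragments and m parity fragments."""
    return Fraction(k, k + m)


def raw_bytes(object_bytes: int, efficiency: Fraction) -> int:
    """Raw disk space needed to store object_bytes at the given efficiency."""
    return math.ceil(object_bytes / efficiency)


if __name__ == "__main__":
    size = 1_000_000  # a 1 MB object
    # Example layouts only; both survive two node failures if spread across nodes.
    for name, eff in (("RF3", rf_efficiency(3)), ("EC 4+2", ec_efficiency(4, 2))):
        print(f"{name:<7} {float(eff):.0%} usable, {raw_bytes(size, eff):,} raw bytes")
```

For a 1 MB object, RF3 consumes 3 MB of raw capacity (33% usable), while EC 4+2 consumes 1.5 MB (67% usable) for the same two-failure tolerance.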

Erasure Coding (EC) is a storage policy that uses mathematical algorithms to split an object into data fragments, compute additional parity fragments, and distribute all of the fragments across different storage nodes in the object storage cluster. EC has lower write performance than RF because encoding the fragments adds computational overhead, and reads that must reconstruct missing fragments incur decoding overhead as well. In exchange, EC offers better storage efficiency: redundancy comes from a small number of parity fragments rather than full copies, so more of the cluster’s raw capacity holds usable data.
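
The idea of data plus parity fragments can be illustrated with the simplest possible erasure code: a single XOR parity fragment computed over k data fragments, which can rebuild any one lost fragment. This is a teaching sketch, not HyperStore's implementation, and `encode`/`decode` are hypothetical names. Practical k+m schemes, such as Reed-Solomon codes, tolerate up to m lost fragments.

```python
from __future__ import annotations


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal data fragments plus one XOR parity fragment."""
    frag_len = -(-len(data) // k)                # ceiling division
    padded = data.ljust(frag_len * k, b"\0")     # zero-pad to equal-length fragments
    fragments = [padded[i * frag_len:(i + 1) * frag_len] for i in range(k)]
    parity = bytearray(frag_len)
    for frag in fragments:
        for i, b in enumerate(frag):
            parity[i] ^= b                       # parity = XOR of all data fragments
    return fragments + [bytes(parity)]


def decode(fragments: list[bytes | None], k: int, original_len: int) -> bytes:
    """Rebuild the original data when at most one fragment is missing (None)."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("single XOR parity can recover only one lost fragment")
    if missing:
        # XOR of every surviving fragment (data and parity) equals the lost one.
        frag_len = len(next(f for f in fragments if f is not None))
        rebuilt = bytearray(frag_len)
        for f in fragments:
            if f is not None:
                for i, b in enumerate(f):
                    rebuilt[i] ^= b
        fragments = list(fragments)
        fragments[missing[0]] = bytes(rebuilt)
    # Join the k data fragments and strip the zero padding.
    return b"".join(fragments[:k])[:original_len]


if __name__ == "__main__":
    obj = b"hello, object storage"
    frags = encode(obj, k=4)        # 4 data fragments + 1 parity fragment
    frags[2] = None                 # simulate losing the node holding fragment 2
    print(decode(frags, k=4, original_len=len(obj)))  # b'hello, object storage'
```

Even in this toy version, recovering a lost fragment means reading every surviving fragment and recomputing the missing one, which is the decoding overhead EC pays on degraded reads.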

Optimizing performance, automatically

In modern cloud workloads, it is hard to predict, let alone enforce, a consistent object size. Every workflow, workload, and application set differs in its average object size. Furthermore, Managed Service Providers (MSPs) and Cloud Storage Providers (CSPs) often have no visibility into their customers’ applications and workflows, and no way to know the object sizes their tenant customers are using. The storage layer therefore needs to become more intelligent and application-aware.

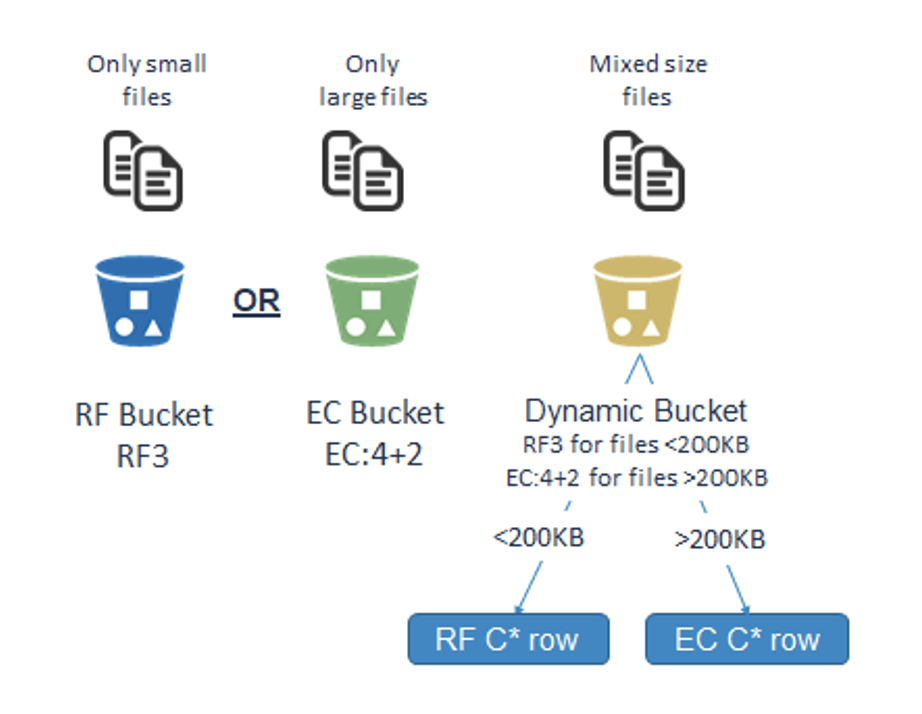

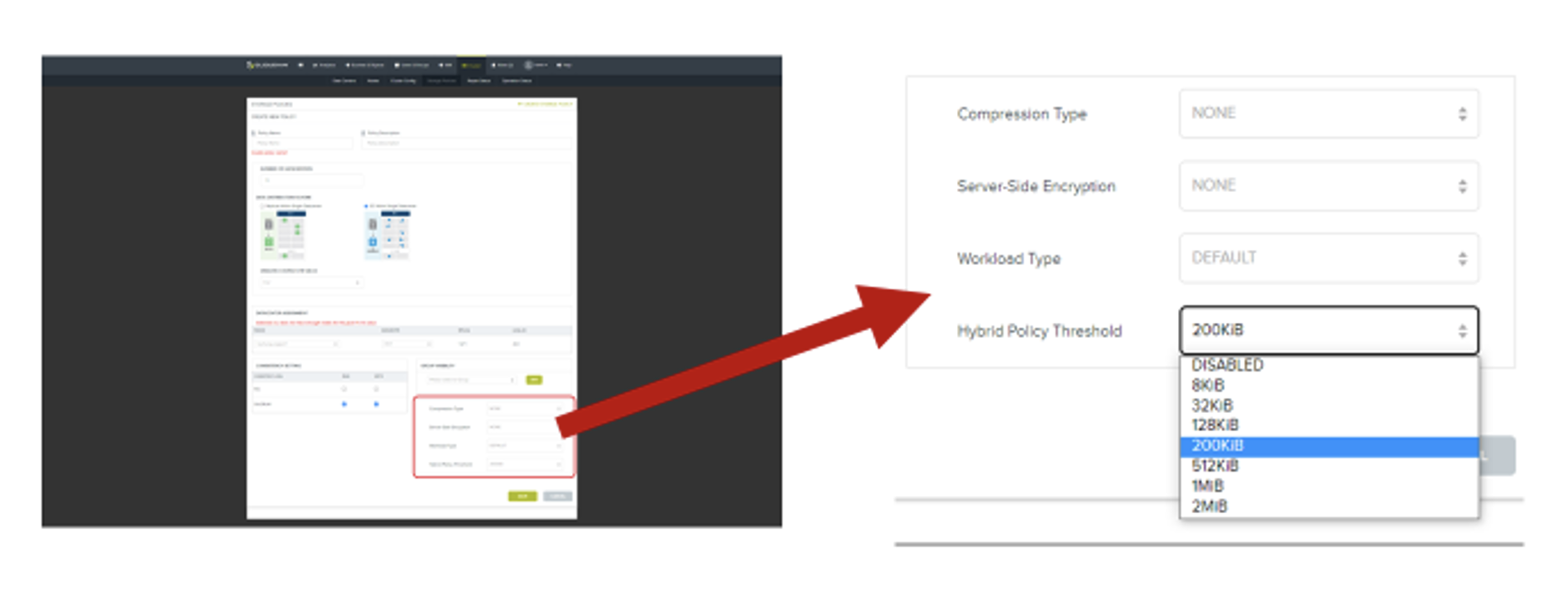

With the HyperStore 7.5.1 release, Cloudian now supports configuring hybrid storage policies. A hybrid policy is created on a bucket and makes HyperStore application-aware: it detects the size of each object and automatically protects it with a replication (RF) scheme if the object is smaller than a configured threshold, or with an erasure coding (EC) scheme if it is larger. By default, the threshold is 200 KiB. Hybrid policies also work in multi-DC scenarios using distributed EC deployments, where replication (rather than EC) is applied across DCs for smaller objects. The result is a “best of both” storage strategy: the best performance for smaller objects and the best storage efficiency for larger objects.
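
The placement rule itself is easy to model. The following Python sketch is a hypothetical illustration of the decision a hybrid policy makes for each object, using the 200 KiB default threshold. The `HybridPolicy` class, its field names, and the example RF3 and EC 4+2 schemes are assumptions for illustration, not HyperStore's API or configuration syntax. The sketch also assumes that an object exactly at the threshold is erasure coded.

```python
from dataclasses import dataclass

KIB = 1024
DEFAULT_THRESHOLD_BYTES = 200 * KIB  # the 200 KiB default threshold


@dataclass(frozen=True)
class HybridPolicy:
    """Hypothetical model of a hybrid policy; field names are illustrative only."""
    rf_scheme: str = "RF3"      # assumed replication scheme for small objects
    ec_scheme: str = "EC 4+2"   # assumed erasure-coding scheme for large objects
    threshold_bytes: int = DEFAULT_THRESHOLD_BYTES

    def scheme_for(self, object_bytes: int) -> str:
        # Below the threshold -> replication (faster small-object access).
        # Otherwise -> erasure coding (better storage efficiency).
        # Sending an object exactly at the threshold to EC is an assumption.
        if object_bytes < self.threshold_bytes:
            return self.rf_scheme
        return self.ec_scheme


if __name__ == "__main__":
    policy = HybridPolicy()
    for size in (4 * KIB, 199 * KIB, 200 * KIB, 5 * KIB * KIB):
        print(f"{size:>9,} bytes -> {policy.scheme_for(size)}")
```

Because the decision depends only on object size, it can be made per object as data arrives, without knowing anything about the tenant's application in advance.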

Glenn Haley, Senior Director of Product Management, Cloudian
