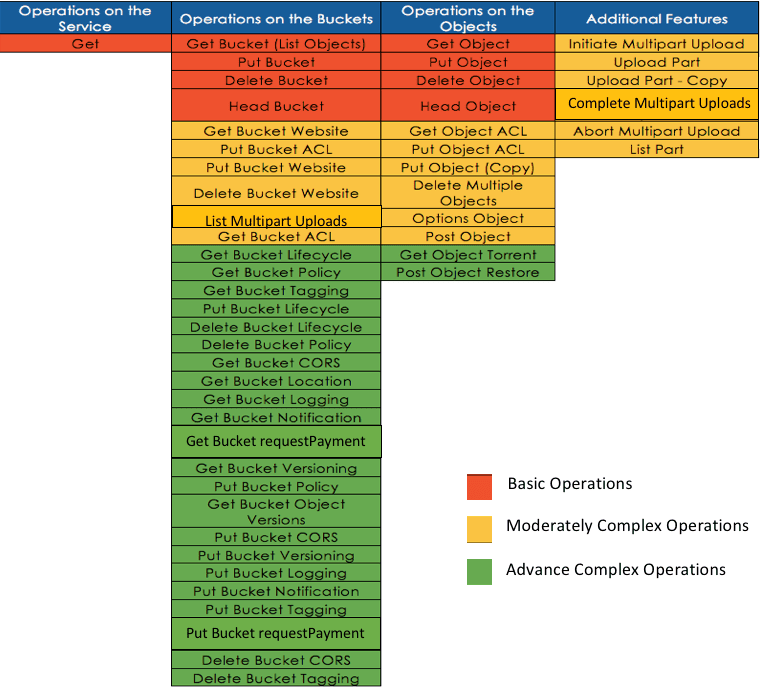

As a storage administrator or data protection specialist in your data center, you are likely looking for an alternative storage solution to keep up with your big data growth. And with all that’s been reported about Amazon (stellar growth, strong quarterly earnings), I am pretty sure its Simple Storage Service (S3) is on your radar. S3 is a secure, highly durable, and highly scalable cloud storage service. Here’s an API view of what you can do with S3:

As a user or developer, you can securely manage and access your buckets and your data anytime, from anywhere in the world where you have web access. As a storage administrator, you can easily provision and manage storage for any group and any user on always-on, highly scalable cloud storage. So if you are convinced that you want to explore S3 as a cloud storage solution, Cloudian HyperStore should be on your radar as well. I believe a solution that is easy to deploy and use helps accelerate the adoption of the technology. Here’s what you will need to deploy your own cloud storage solution:

- Cloudian’s HyperStore Software – Free Community Edition

- Recommended minimum hardware configuration

    - Intel-compatible hardware

    - Processor: 1 CPU, 8 cores, 2.4GHz

    - Memory: 32GB

    - Disk: 12 x 2TB HDD, 2 x 250GB HDD (12 drives for data, 2 drives for OS/Metadata)

    - RAID: RAID-1 recommended for the OS/Metadata, JBOD for the Data Drives

    - Network: 1x1GbE Port

You can install a single Cloudian HyperStore node for non-production purposes, but it is best practice to deploy a minimum 3-node HyperStore cluster so that you can use logical storage policies (replication and erasure coding) to ensure your S3 cloud storage is highly available in your production cluster. It is also recommended to use physical servers for production environments.
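To get a feel for what the recommended hardware gives you, here is a rough capacity estimate for a 3-node cluster with 12 x 2TB data drives per node. The replication factor of 3 and the 2+1 erasure coding layout are illustrative assumptions, not HyperStore defaults, and the numbers ignore file system and metadata overhead.

```python
"""Back-of-the-envelope capacity estimate for a small storage cluster."""


def raw_capacity_tb(nodes: int, data_drives: int, drive_tb: float) -> float:
    """Total raw capacity across all data drives in the cluster."""
    return nodes * data_drives * drive_tb


def usable_with_replication(raw_tb: float, copies: int) -> float:
    """Each object is stored `copies` times, so usable space is raw / copies."""
    return raw_tb / copies


def usable_with_erasure_coding(raw_tb: float, k: int, m: int) -> float:
    """With k data and m parity fragments, k / (k + m) of raw space is usable."""
    return raw_tb * k / (k + m)


if __name__ == "__main__":
    # Hardware figures from the recommended minimum configuration above.
    raw = raw_capacity_tb(nodes=3, data_drives=12, drive_tb=2.0)
    print(f"Raw capacity: {raw:.0f} TB")
    # Assumed policies, chosen only for illustration.
    print(f"Usable, 3 replicas: {usable_with_replication(raw, 3):.0f} TB")
    print(f"Usable, 2+1 erasure coding: {usable_with_erasure_coding(raw, 2, 1):.0f} TB")
```

The trade-off is the usual one: replication costs more raw capacity per stored byte, while erasure coding stores more data on the same disks at the price of extra work on reads and rebuilds.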

Here are the steps to set up a 3-node Cloudian HyperStore S3 Cluster:

- Use the Cloudian HyperStore Community Edition ISO for OS installation on all 3 nodes. This will install CentOS 6.7 on your new servers.

- Log on to your servers

    - The default root password is password (Update your root access for production environments)

- Under /root, there are 2 Cloudian directories:

    - CloudianTools

        - configure_appliance.sh allows you to perform the following tasks:

            - Change the default root password

            - Change time zone

            - Configure network

            - Format and mount available disks for Cloudian S3 data storage

                - Available disks that were automatically formatted and mounted during the ISO install for S3 storage will look similar to the following /cloudian1 mount:

    - CloudianPackages

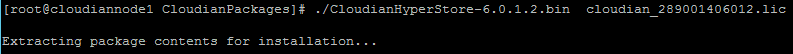

- On one of your nodes, run ./CloudianHyperStore-6.0.1.2.bin cloudian_xxxxxxxxxxxx.lic to extract the package contents. This node will be the Puppet master node.

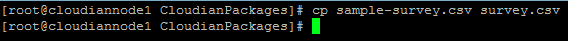

- Copy sample-survey.csv to survey.csv

- Edit the survey.csv file

In the survey.csv file, specify the region, node name(s), IP address(es), data center (DC), and rack (RAC) of your Cloudian HyperStore S3 Cluster.

NOTE: You can specify an additional NIC on your x86 servers for internal cluster communication.
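If you would rather generate the survey file than edit it by hand, here is a minimal sketch that renders one line per node and catches a few easy mistakes (invalid IP addresses, duplicate names or addresses). The column order follows the description above (region, node name, IP address, DC, RAC), but that order, the node names, and the addresses are assumptions for illustration; the sample-survey.csv shipped with your package is the authoritative reference for the format.

```python
import csv
import io
import ipaddress

# Hypothetical nodes: (region, node name, IP address, DC, RAC).
NODES = [
    ("region1", "cloudian-node1", "10.10.1.11", "DC1", "RAC1"),
    ("region1", "cloudian-node2", "10.10.1.12", "DC1", "RAC1"),
    ("region1", "cloudian-node3", "10.10.1.13", "DC1", "RAC1"),
]


def render_survey(nodes) -> str:
    """Return survey file content, one comma-separated line per node.

    Raises ValueError on an invalid IP address or a duplicate name/IP.
    """
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    seen_names, seen_ips = set(), set()
    for region, name, ip, dc, rack in nodes:
        ipaddress.ip_address(ip)  # raises ValueError if the address is malformed
        if name in seen_names:
            raise ValueError(f"duplicate node name: {name}")
        if ip in seen_ips:
            raise ValueError(f"duplicate IP address: {ip}")
        seen_names.add(name)
        seen_ips.add(ip)
        writer.writerow([region, name, ip, dc, rack])
    return buf.getvalue()


if __name__ == "__main__":
    # Print the content; redirect it to survey.csv once it looks right.
    print(render_survey(NODES), end="")
```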

- Run ./cloudianInstall.sh and select “Install Cloudian HyperStore”. When prompted, input the survey.csv file name. Continue with the setup.

NOTE: If you are deploying in a non-production environment, your servers (virtual or physical) may not have the minimum resources or a DNS server. In that case, you can run the install with ./cloudianInstall.sh dnsmasq force. Cloudian HyperStore includes an open source domain resolution utility to resolve all HyperStore service endpoints.

In the following screenshot, the information provided in the survey.csv file is used in the Cloudian HyperStore cluster configuration. In this non-production setup, I am also using a DNS server for domain name resolution in my virtual environment.

- Your Cloudian HyperStore S3 Cloud Storage is now up and running.

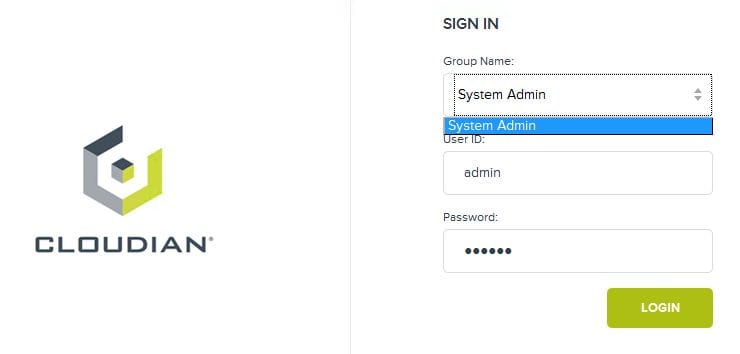

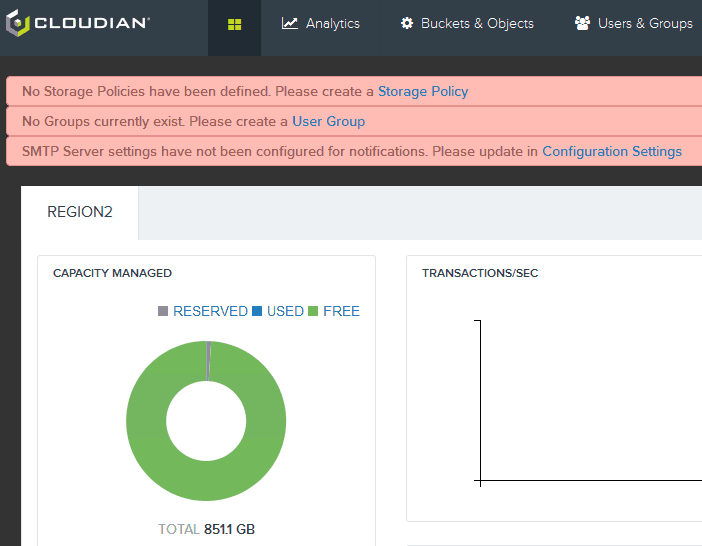
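Before opening the management console, it can be worth confirming that your service endpoints actually resolve from your workstation, whether you rely on your own DNS server or on the bundled resolution utility. The sketch below only does name lookups; the endpoint names are hypothetical placeholders, so substitute the ones shown in your own cluster configuration.

```python
import socket

# Hypothetical endpoint names; replace with the ones from your cluster configuration.
ENDPOINTS = [
    "s3-region1.example.com",
    "s3-admin.example.com",
    "cmc.example.com",
]


def unresolved(hostnames, resolver=socket.gethostbyname):
    """Return the hostnames that fail to resolve, in their original order."""
    failed = []
    for host in hostnames:
        try:
            resolver(host)
        except OSError:  # socket.gaierror is a subclass of OSError
            failed.append(host)
    return failed


if __name__ == "__main__":
    missing = unresolved(ENDPOINTS)
    if missing:
        print("Could not resolve:", ", ".join(missing))
    else:
        print("All endpoints resolve.")
```

The `resolver` parameter exists only so the check can be exercised without a network; in normal use you can leave it at its default.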

- Access your Cloudian Management Console. The default System Admin group user ID is admin and the default password is public.

- Complete the Storage Policies, Group, and SMTP settings.

Congratulations! You have successfully deployed a 3-node Cloudian HyperStore S3 Cluster.