As media organizations look for new ways to monetize their ever-growing content archives, they need to ask themselves whether they have the right storage foundation. A recent article for Post Magazine discussed the advantages of object storage over LTO tape for managing and protecting content. Below is a reprint of the article.

Object Storage: Better Monetizing Content by Transitioning from Tape

Media and entertainment (M&E) companies derive significant recurring revenue from old content. From traditional television syndication to YouTube uploads, this content can be distributed and monetized in several different ways. Many M&E companies, particularly broadcasters, store their content in decades-old LTO tape libraries. With years of material, including thousands of episodes and millions of digital assets, these tape libraries can grow so large that they become unmanageable. Deployments can easily reach several petabytes of data and may sprawl across multiple floors in a broadcaster’s media storage facility. Searching these massive libraries and retrieving specific content can be a cumbersome, time-consuming task, like trying to find a needle in a haystack.

Object storage provides a far simpler, more efficient and cost-effective way for broadcasters to manage their old video content. With limitless scalability, object storage can easily grow to support petabytes of data without occupying a large physical footprint. Moreover, the technology supports rich, customizable metadata, making it easier and quicker to search and retrieve content. Organizations can use a Google-like search tool to immediately retrieve assets, ensuring that they have access to all existing content, no matter how old or obscure, and can readily monetize that content.

Here’s a deeper look at how the two formats compare in searchability, data access, scalability and management.

Searchability and data access

LTO tape was created to store static data for the long haul. Locating, accessing and retrieving this data was always an afterthought. In the most efficient tape libraries today, staff may be able to find a piece of media within a couple of minutes. But even in this scenario, if multiple jobs are queued ahead of the request, finding that asset could take hours. And this assumes that the tape containing the asset is stored in the library and in good condition (i.e., it can be read and doesn’t jam).

This also assumes the staff has the proper records to find the asset in the first place. Because of the limitations of the format, LTO tape files do not support detailed metadata. This means that organizations can only search for assets using basic file attributes, such as date created or title. It’s impossible to conduct any sort of ad hoc search. If a system’s data index doesn’t contain the file attributes that a user is looking for, the only option is to look manually, an untenable task for most M&E organizations that have massive content libraries. This won’t change in the future, as tape cannot support advanced technologies such as artificial intelligence (AI) and machine learning (ML) to improve searchability.

On the other hand, object storage makes it possible to immediately search and access assets. The architecture supports fully customizable metadata, allowing staff to attach any attributes they want to any asset, no matter how specific. For example, a news broadcast could have metadata identifying the anchors or describing the type of stories covered. When trying to find an asset, a user can search for any of those attributes and rapidly retrieve it. This makes it much easier to find old or existing content and use it for new monetization opportunities, driving much greater return on investment (ROI) from that content. This value will only increase as AI and ML, which are both fully supported in object storage systems, provide new ways to analyze and leverage data (e.g., facial recognition, speech recognition and action analysis), increasing opportunities to monetize archival content.
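To make the idea of searching on custom metadata concrete, here is a minimal, in-memory sketch in Python. It is not any vendor's API: the `Asset` class, the `search` function, the object keys and the attribute names (`anchors`, `topic`) are all hypothetical, chosen only to mirror the news-broadcast example above.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """A stored object: a key plus arbitrary user-defined metadata (hypothetical model)."""
    key: str
    metadata: dict = field(default_factory=dict)


def search(assets, **criteria):
    """Return the keys of assets whose metadata satisfies every criterion.

    A criterion matches when the wanted value equals the stored value,
    or is one of its items when the stored value is a list.
    """
    results = []
    for asset in assets:
        for name, wanted in criteria.items():
            actual = asset.metadata.get(name)
            if isinstance(actual, list):
                if wanted not in actual:
                    break
            elif actual != wanted:
                break
        else:  # no criterion failed
            results.append(asset.key)
    return results


# Illustrative catalog; every key and attribute here is made up.
catalog = [
    Asset("news/2009-03-14-evening.mxf", {"anchors": ["J. Doe", "A. Smith"], "topic": "election"}),
    Asset("news/2011-07-02-morning.mxf", {"anchors": ["A. Smith"], "topic": "weather"}),
    Asset("drama/s01e01.mxf", {"series": "Example Show", "season": 1}),
]

matches = search(catalog, anchors="A. Smith", topic="weather")
print(matches)
```

Because the attributes are free-form, a new one (say, a tag produced by a speech-recognition pass) could be attached later and searched the same way without changing the catalog structure.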

Scalability and management

Organizations must commit significant staff and resources to manage and grow an LTO tape library. Due to their physical complexity, these libraries can be difficult and expensive to scale. In the age of streaming, broadcasters are increasing their content at breakneck speed. And with the adoption of capacity-intensive formats like 4K, 8K and 360/VR, more data is being created for each piece of content. Just several hundred hours of video in these advanced formats can easily reach a petabyte in size. In LTO environments, the only way to increase capacity is to add more tapes, which is particularly difficult if there are no available library slots. When that’s the case, the only choice is to add another library. Many M&E companies’ tape libraries already stretch across several floors, leaving little room for expansion, especially because new content in higher-resolution formats tends to consume more capacity than older content.
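The petabyte figure is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below assumes uncompressed UHD video at 3840×2160, 60 frames per second and 20 bits per pixel (10-bit 4:2:2); those parameters are illustrative assumptions, not figures from the article, and compressed mezzanine or delivery formats would fit many more hours into the same space.

```python
PETABYTE_BYTES = 10**15  # decimal petabyte


def video_bitrate_bps(width, height, fps, bits_per_pixel):
    """Raw (uncompressed) video bitrate in bits per second."""
    return width * height * fps * bits_per_pixel


def hours_per_petabyte(bitrate_bps):
    """How many hours of video at the given bitrate fit in one petabyte."""
    seconds = PETABYTE_BYTES * 8 / bitrate_bps
    return seconds / 3600


# Assumed format: UHD, 60 fps, 10-bit 4:2:2 (20 bits per pixel on average).
uhd_bitrate = video_bitrate_bps(3840, 2160, 60, 20)
uhd_hours = hours_per_petabyte(uhd_bitrate)
print(f"{uhd_bitrate / 1e9:.2f} Gbit/s -> {uhd_hours:.0f} hours per petabyte")
```

Under these assumptions the stream runs at just under 10 Gbit/s, and a petabyte holds a little over 220 hours of it, which is in line with the "several hundred hours" estimate above.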

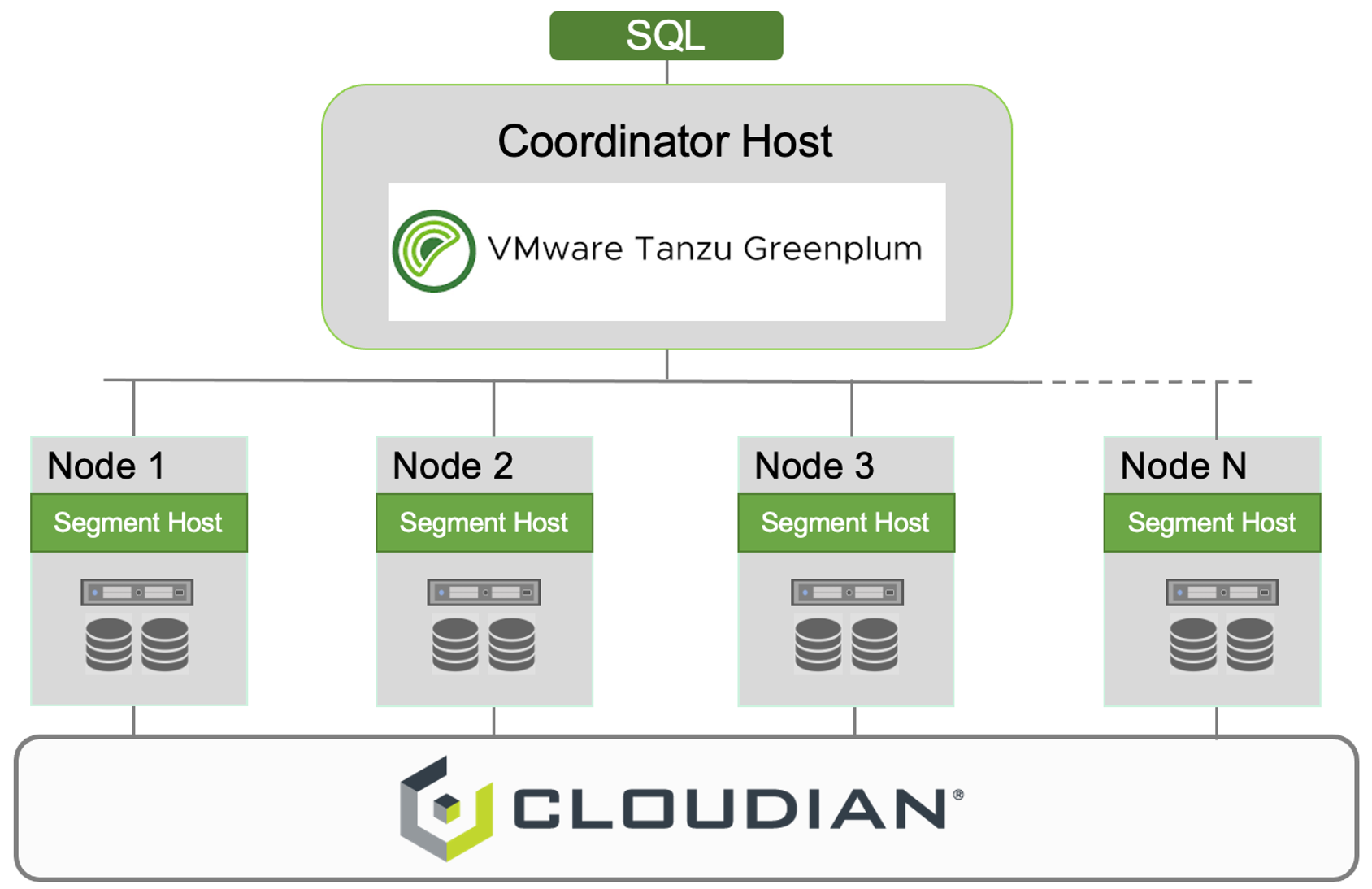

Object storage was designed for limitless scalability. It treats data as objects that are stored in a flat address space, which makes it easy to grow deployments via horizontal scaling (scaling out) rather than vertical scaling (scaling up). To expand a deployment, organizations simply add more nodes or devices to their existing system, rather than adding entirely new systems (such as additional LTO libraries). Because of this, object storage is simple to scale to hundreds of petabytes and beyond. With data continuing to grow exponentially, especially for video content, being able to scale easily and efficiently helps M&E companies maintain order and visibility over their content, enabling them to easily find and leverage those assets for new opportunities. Increasing the size of a sprawling, messy tape library is exactly the opposite.
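One way to picture scaling out over a flat address space is a consistent-hash ring, a placement technique commonly used in distributed storage. The article does not say which placement scheme any particular product uses, so treat the following Python sketch as an illustration under that assumption; the `Ring` class, node names and object keys are hypothetical.

```python
import bisect
import hashlib


def _hash(value: str) -> int:
    """Map a string to a 64-bit position on the ring."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


class Ring:
    """Minimal consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, vnodes=64):
        self.vnodes = vnodes
        self._points = []  # sorted ring positions
        self._owner = {}   # ring position -> node name

    def add_node(self, node):
        # Each node claims several positions so load spreads evenly.
        for i in range(self.vnodes):
            point = _hash(f"{node}#{i}")
            self._owner[point] = node
            bisect.insort(self._points, point)

    def locate(self, key):
        # An object belongs to the first position clockwise from its hash.
        idx = bisect.bisect(self._points, _hash(key)) % len(self._points)
        return self._owner[self._points[idx]]


ring = Ring()
for name in ("node-1", "node-2", "node-3"):
    ring.add_node(name)

keys = [f"archive/episode-{n:05d}.mxf" for n in range(2000)]
before = {k: ring.locate(k) for k in keys}

ring.add_node("node-4")  # scale out by adding one node
after = {k: ring.locate(k) for k in keys}

moved = [k for k in keys if before[k] != after[k]]
print(f"{len(moved)} of {len(keys)} objects relocated to the new node")
```

In this sketch, adding a fourth node relocates only the objects the new node takes over, while everything else stays where it was. Object keys never change, so applications keep addressing content the same way as capacity grows.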

Tape libraries also lack centralized management across locations. To access or manage a given asset, a user has to be near the library where it’s physically stored. For M&E organizations that have tape archives in multiple locations, this causes logistical issues, as each separate archive must be managed individually. As a result, companies often need to hire multiple administrators to operate each archive, driving up costs and causing operational siloing.

Object storage addresses the challenge of geo-distribution with centralized, universal management capabilities. Because the architecture leverages a global namespace and connects all nodes together in a single storage pool, assets can be accessed and managed from any location. While data on tape can only be accessed where the physical copy is held, object storage lets companies reach all of their content regardless of where it is physically stored. One person can administer an entire globally distributed deployment, enforcing policies, creating backup copies, provisioning new users and executing other key tasks for the whole organization.

Conclusion

M&E companies still managing video content in LTO tape libraries suffer from major inefficiencies and, in turn, lost revenue. The format simply wasn’t designed for the modern media landscape. Object storage is a much newer architecture that was built to accommodate massive data volumes in the digital age. Its searchability, accessibility, scalability and centralized management help broadcasters boost ROI from existing content.

To learn more about Cloudian’s Media and Entertainment solutions, visit cloudian.com/solutions/media-and-entertainment/.

Amit Rawlani, Director of Solutions & Technology Alliances, Cloudian
